Travel blogging: Zürichberg

Saturday, July 11th, 2015

I was in Zurich last week, where my hosts kindly took me to a very nice restaurant on Zürichberg, a hill that offers pretty views and a peaceful setting of fields and forests. In addition to the garden restaurant of the Hotel Zurichberg, there is also a terrace with even better views. It turns out that Zürichberg is home to all sorts of attractions: FIFA’s headquarters, the Zurich Zoo, and a beautiful cemetery where James Joyce is buried.

FIFA’s headquarters greet you with three flags, the middle one proudly proclaiming “My Game is Fair Play.” It’s good that they cleared that up. I was curious about a sculpture peeking out from behind some trees, but as we tried to enter the FIFA grounds, a security guard stopped us, explaining that unless we were children playing in the soccer match nearby or their parents, we could not proceed. Nearby was another guard, this one armed, which is not a common sight in Zurich.

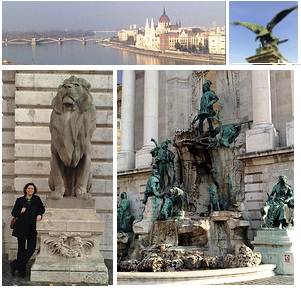

The highlight of this area for me was Friedhof Fluntern, a most charming cemetery, if that word is appropriate given the context (as a reviewer on TripAdvisor aptly asked, “how do you rate a cemetery?”). Given the Swiss context, it is no great surprise that the grounds are very orderly. But there is more to it: it feels more like a garden than a cemetery. You can imagine spending time there to get away from the hustle and bustle of the city, and a colleague even mentioned that he sometimes goes there to read. The headstones go beyond the usual, venturing into the whimsical and artistic. The cemetery sits on a hill, which adds to its character. I enjoyed going from row to row, trying to peek into the lives of the people buried there through their names, the dates and notes on the stones, and the little sculptures honoring them. See more of my Friedhof Fluntern photos here.

It was too hot to proceed to the Zoo, but having later read that they have Galapagos giant tortoises, I was bummed by my decision to skip it and will be sure to visit next time I am in town.

To get to Zürichberg, take Tram 6 from Central to the Zoo stop. Central is a 2-minute walk east of the main train station, which in turn is ten minutes from the airport by train. Zurich offers day tickets for its entire public transportation system, and the 24- or 72-hour ZürichCARD can also be very worthwhile if you plan to visit numerous attractions.